Even Easier Introduction to Video Analytics

You have probably heard the term Video Analytics many times. But when you try to learn more about it, you usually end up on a framework promotion blog or a service provider’s page. So in this blog, let’s understand just video analytics and dive into its components. Roll up your sleeves, grab your coffee mug and let’s get started.

To understand Video Analytics, let’s first understand what analytics actually means.

What is Analytics?

Analytics is the process of discovering, interpreting, and communicating significant patterns in data. Quite simply, analytics helps us see insights and meaningful data that we might not otherwise detect. Business analytics, for example, focuses on using insights derived from data to make more informed decisions, which helps organisations increase sales, reduce costs, and make other business improvements. Interesting, right?

Video Analytics

Video analytics is a technology that processes a digital video signal using AI and Computer Vision powered algorithms to find meaningful insights in it. Cool!

Let’s break it down. Suppose you want to find out how many people entered a mall. Running a person counter on the camera mounted over the mall’s entrance can give you this number. Similarly, vehicle counting and classification at tolls and on highways can give meaningful insights to a highway authority. What do you say?

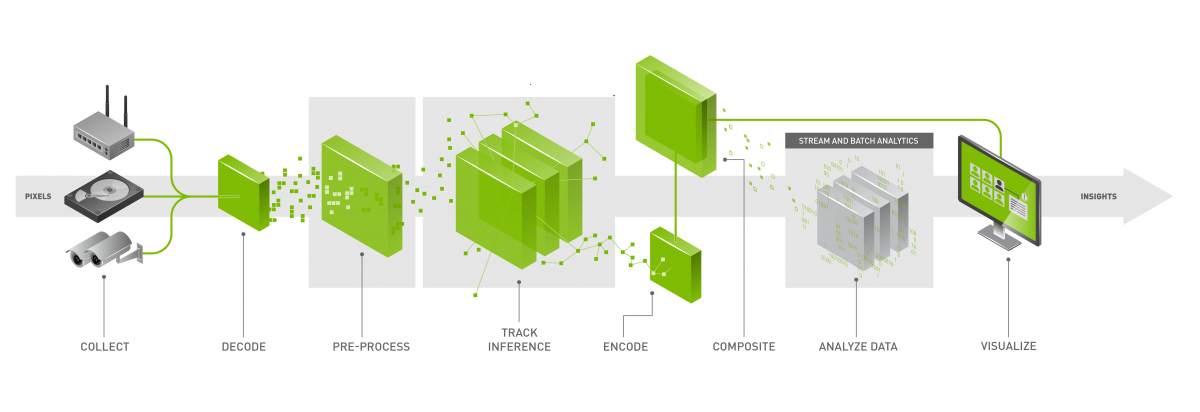

Hopefully you are now clear on the term “video analytics”. To develop large-scale video analytics software, we need to break the whole system into several components. These components are then connected one after another to make an end-to-end video analytics pipeline.

Let’s move ahead and learn more about these components –

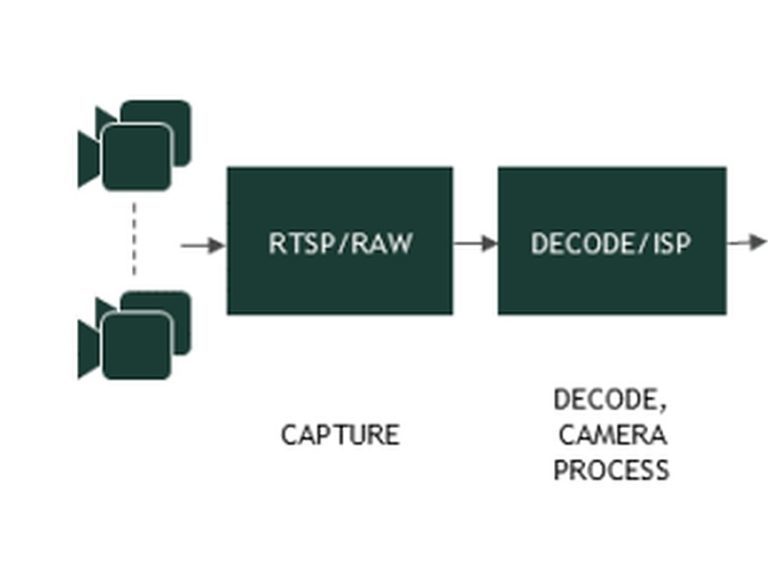
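One way to picture components being attached one after another is as a chain of functions, where each stage consumes the output of the previous one. The sketch below is only an illustration: `build_pipeline` and the three stand-in stages (`decode`, `infer`, `analyse`) are hypothetical names, and the stages do no real video work.

```python
from typing import Callable, Iterable, Iterator

# A stage takes a stream of items and yields a stream of items.
Stage = Callable[[Iterable], Iterator]

def build_pipeline(*stages: Stage) -> Stage:
    """Attach stages one after another into a single callable."""
    def run(stream: Iterable) -> Iterator:
        for stage in stages:
            stream = stage(stream)
        return iter(stream)
    return run

# Hypothetical stand-in stages; real ones would decode video, run a model, etc.
def decode(packets):
    for packet in packets:
        yield {"frame_id": packet, "pixels": None}

def infer(frames):
    for frame in frames:
        yield {**frame, "detections": []}

def analyse(frames):
    for frame in frames:
        yield {**frame, "count": len(frame["detections"])}

pipeline = build_pipeline(decode, infer, analyse)
results = list(pipeline(range(3)))  # three fake "packets" flow through all stages
```

Because every stage is a generator, frames flow through the chain one at a time instead of being collected in memory first.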

Source

In a video analytics pipeline, the point where the video signal enters the pipeline is called the source. A standard video analytics pipeline can have different types of sources. Most often you will find recorded video files (.mp4, .h264/.h265), network streams (RTSP streams) or an integrated (or USB) camera feed.
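To make the source types concrete, here is a minimal sketch that sorts a source string into one of the categories above. The function name `classify_source`, the category labels, and the convention that a bare number or a `/dev/video` path means a local camera are assumptions for illustration; the RTSP address in the usage lines is a made-up example.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse

FILE_EXTENSIONS = {".mp4", ".h264", ".h265"}

def classify_source(source: str) -> str:
    """Return 'camera', 'network_stream', 'file' or 'unknown'."""
    # Assumed convention: "0", "1", ... or /dev/videoN refer to a local camera.
    if source.isdigit() or source.startswith("/dev/video"):
        return "camera"
    parsed = urlparse(source)
    if parsed.scheme == "rtsp":
        return "network_stream"
    if PurePosixPath(parsed.path).suffix.lower() in FILE_EXTENSIONS:
        return "file"
    return "unknown"

print(classify_source("0"))
print(classify_source("rtsp://192.0.2.10:554/stream1"))
print(classify_source("recordings/entry_gate.mp4"))
```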

Decoder

We just learnt what a source is. These sources deliver the video signal as encoded frames. To use these frames, we need to decode them, so the pipeline needs a decoder. Once decoded, each frame’s pixel information (generally a tensor) can be used easily in the rest of the pipeline.
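The sketch below is not a real decoder (that is the job of a codec library). It only shows what the decoder’s output looks like: a flat run of pixel bytes arranged as a height × width × channels structure. The function name `to_frame` and the RGB byte layout are assumptions for illustration.

```python
def to_frame(raw: bytes, width: int, height: int, channels: int = 3):
    """Arrange flat, already-decoded pixel bytes as rows x columns x channels."""
    expected = width * height * channels
    if len(raw) != expected:
        raise ValueError(f"expected {expected} bytes, got {len(raw)}")
    row_len = width * channels
    return [
        [
            list(raw[r * row_len + c * channels : r * row_len + (c + 1) * channels])
            for c in range(width)
        ]
        for r in range(height)
    ]

# A tiny 2x2 RGB image: red, green on the first row; blue, white on the second.
raw = bytes([255, 0, 0,  0, 255, 0,  0, 0, 255,  255, 255, 255])
frame = to_frame(raw, width=2, height=2)
```

In practice a numerical array library would hold this data far more efficiently; nested lists are used here only to keep the example dependency-free.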

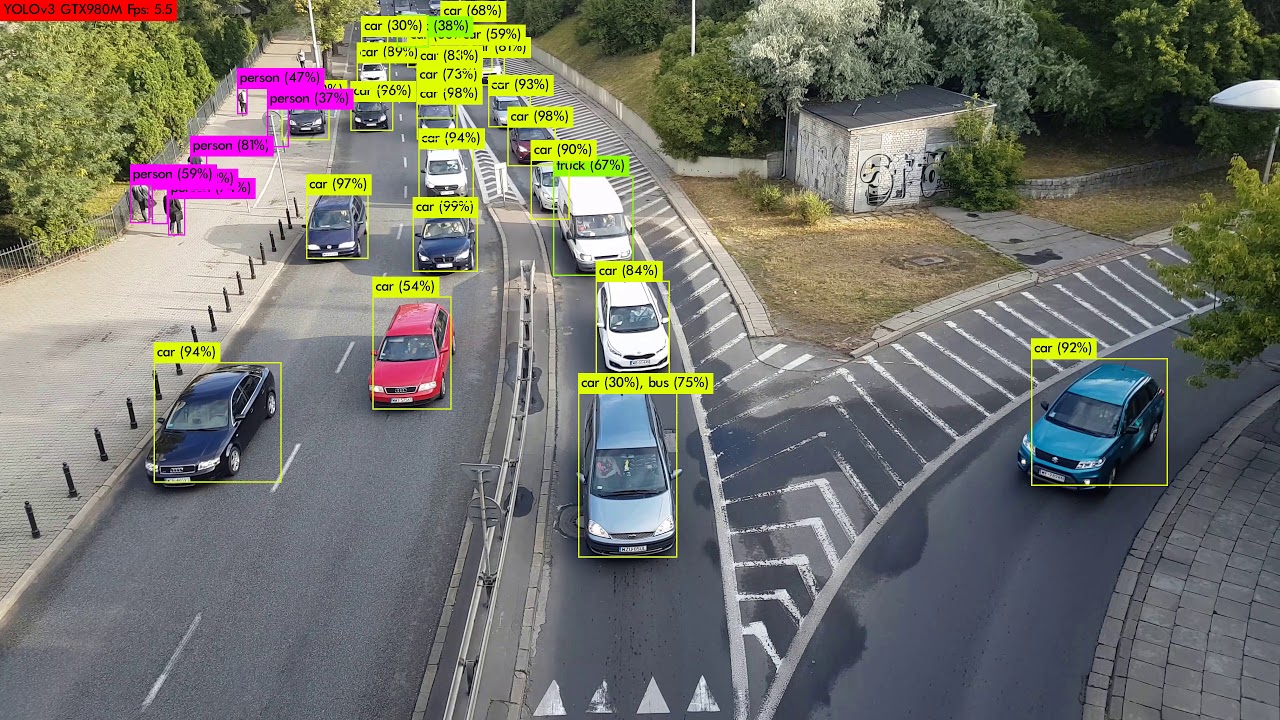

Inference Engine

An inference engine is generally a deep learning computer vision model. Two common types are object detection and image segmentation models. The inference engine runs a forward pass on a frame (or a tensor) and produces meaningful information such as the classification and localisation of detected objects, segmentation results, etc. This information can be used further for extracting insights.

Let’s say we detected vehicles in a frame. This information can now be used for vehicle tracking, vehicle counting, etc.
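As a rough sketch of what a detector’s output might look like, the example below models each detection as a class label, a confidence score and a bounding box, then drops low-confidence results and counts objects per class. The `Detection` type, the `keep_confident` helper, the 0.5 threshold and the sample values are all assumptions for illustration, not the output of any particular model.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class Detection:
    label: str         # object class, e.g. "car"
    confidence: float  # score between 0 and 1
    box: tuple         # (x_min, y_min, x_max, y_max) in pixels

def keep_confident(detections, threshold=0.5):
    """Drop detections whose confidence is below the threshold."""
    return [d for d in detections if d.confidence >= threshold]

# Made-up detections for a single frame.
detections = [
    Detection("car", 0.91, (40, 60, 200, 180)),
    Detection("truck", 0.34, (220, 50, 400, 210)),
    Detection("car", 0.77, (410, 80, 560, 190)),
]

confident = keep_confident(detections)
counts = Counter(d.label for d in confident)
```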

Custom Logic for Analytics

Now we have detected/segmented objects in the frame, but do we just need bounding-box coordinates, object class and confidence? Obviously not! So it’s time to perform analytics. We can use object trackers, direction detection, object counters, ROI (region of interest) filtering, overcrowding detection, object cropping, etc. in this component. Together, these operations can yield insights that would not be possible just by watching multiple streams at once.
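Two of the operations above, ROI filtering and object counting, can be sketched in a few lines. This is a simplified illustration: it assumes an object tracker has already given each object a stable `track_id`, and it counts an object when its box centre moves downwards across a horizontal line. The names `in_roi` and `LineCounter` are hypothetical.

```python
def box_centre(box):
    """Centre point of a (x_min, y_min, x_max, y_max) box."""
    x_min, y_min, x_max, y_max = box
    return ((x_min + x_max) / 2, (y_min + y_max) / 2)

def in_roi(box, roi):
    """True if the box centre lies inside the rectangular region of interest."""
    cx, cy = box_centre(box)
    rx_min, ry_min, rx_max, ry_max = roi
    return rx_min <= cx <= rx_max and ry_min <= cy <= ry_max

class LineCounter:
    """Count tracked objects whose centre crosses a horizontal line going down."""

    def __init__(self, line_y):
        self.line_y = line_y
        self.last_y = {}  # track_id -> centre y seen in the previous frame
        self.count = 0

    def update(self, track_id, box):
        _, cy = box_centre(box)
        prev = self.last_y.get(track_id)
        if prev is not None and prev < self.line_y <= cy:
            self.count += 1
        self.last_y[track_id] = cy
        return self.count

counter = LineCounter(line_y=100)
# Track 1 walks down across the line; track 2 stays below it the whole time.
for track_id, box in [(1, (0, 40, 20, 60)), (2, (50, 110, 70, 130)),
                      (1, (0, 80, 20, 100)), (2, (50, 120, 70, 140)),
                      (1, (0, 100, 20, 120))]:
    counter.update(track_id, box)
```

Keeping the previous position per track is what stops the same person from being counted again on every frame after they cross.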

Metadata Conversion

From the previous component, we got a lot of meaningful information about the detected objects. But this information should be stored and transmitted in a structured manner, shouldn’t it? In this component we structure the object and frame metadata. This can then be used to build analytics dashboards, produce powerful visualisations and generate alerts.
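A common way to structure this kind of information is a JSON message per frame. The sketch below shows one possible layout; the field names (`source_id`, `frame_id`, `objects`, and so on) and the `frame_metadata` helper are assumptions for illustration, not a standard schema.

```python
import json
from datetime import datetime, timezone

def frame_metadata(source_id, frame_id, detections, timestamp=None):
    """Bundle per-frame and per-object information into one dictionary."""
    timestamp = timestamp or datetime.now(timezone.utc)
    return {
        "source_id": source_id,
        "frame_id": frame_id,
        "timestamp": timestamp.isoformat(),
        "object_count": len(detections),
        "objects": [
            {"label": label, "confidence": round(conf, 2), "box": list(box)}
            for label, conf, box in detections
        ],
    }

# Made-up detections as (label, confidence, box) tuples.
detections = [("person", 0.88, (12, 30, 64, 190)), ("person", 0.73, (90, 28, 150, 200))]
payload = json.dumps(frame_metadata("mall_entry_cam", 1042, detections))
decoded = json.loads(payload)
```

A message like `payload` can be written to a database or sent over a message queue, and a dashboard or alerting service can read it without knowing anything about the model that produced it.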

These were all the components of a general video analytics pipeline. I hope you found this blog helpful. Feel free to ask any questions in the comment section below. Till then, enjoy video analytics 🙂